Most medical news hinges on a single, often misleading number: the p-value. The truth is, the 95% Confidence Interval is a far more powerful tool for seeing what a study really means for you.

- It reveals a study’s “range of uncertainty,” showing if a result is robust or just statistical noise.

- It helps you distinguish between dramatic-sounding “relative risk” and meaningful “real-world impact.”

Recommendation: Focus on the Confidence Interval’s range and the absolute numbers to critically evaluate any health claim before you trust it.

“Coffee Cures Cancer!” one week, “Coffee Causes Cancer!” the next. If you follow health news, this dizzying cycle of contradictory headlines is frustratingly familiar. As patients and journalists, we’re told to look for “statistically significant” results, a concept usually tied to a mysterious number called the p-value. For decades, a p-value less than 0.05 has been the gatekeeper of medical truth, the marker that separates a “failed” study from a “successful” one.

But what if focusing on the p-value is like judging a symphony by a single note? It tells you something, but it misses the entire performance. The real key to unlocking a study’s true meaning—and its crucial limitations—is the often-overlooked 95% Confidence Interval (CI). This isn’t just another piece of jargon; it’s a practical ‘BS Detector’ that empowers you to see the bigger picture. The CI provides a range of plausible values for a result, revealing the true uncertainty and stability of a finding in a way a simple p-value never can.

This guide is designed to equip you with a statistical toolkit. We will move beyond the simple yes/no verdict of the p-value and learn how to use the Confidence Interval as our primary lens. We will deconstruct sensationalist claims, understand the vast difference between relative and absolute risk, spot the biases that distort medical literature, and ultimately, learn how to assess the real-world impact of any study. It’s time to read between the lines of medical news and become a more critical, informed consumer of science.

To navigate this complex landscape, this article breaks down the essential concepts you need to build your critical thinking toolkit. The following sections will guide you through each component, from understanding risk to spotting flawed research designs.

Summary: Beyond ‘Significant’: Why 95% Confidence Intervals Are the Key to Understanding Medical News

- Why a 10% Risk Reduction Sounds Better Than “3 Fewer Cases per 1000”

- Why Positive Studies Get Published 3x More Often Than Negative Ones?

- Observational Study vs RCT: Why the Gold Standard Costs More?

- The Correlation Mistake: Why Eating Almonds Doesn’t Cause Longevity

- How to Use Bayesian Reasoning to Interpret Positive Test Results?

- Why Following “Wellness Guru” Advice Could Hospitalise You This Winter?

- The “Normal” X-Ray That Missed a Hairline Fracture Until 6 Weeks Later

- Deconstructing a Health Headline: Are Digital X-Rays Really ‘4x Better’?

Why a 10% Risk Reduction Sounds Better Than “3 Fewer Cases per 1000”

A new drug “reduces heart attack risk by 50%!” This headline sounds revolutionary. But what if your initial risk was just two in 1,000? A 50% reduction means your risk is now one in 1,000. The absolute risk reduction is only one fewer heart attack for every 1,000 people treated. This is the critical distinction between relative risk (the big, impressive percentage) and absolute risk (the real-world impact). Marketers and news headlines almost always use relative risk because it sounds more dramatic.

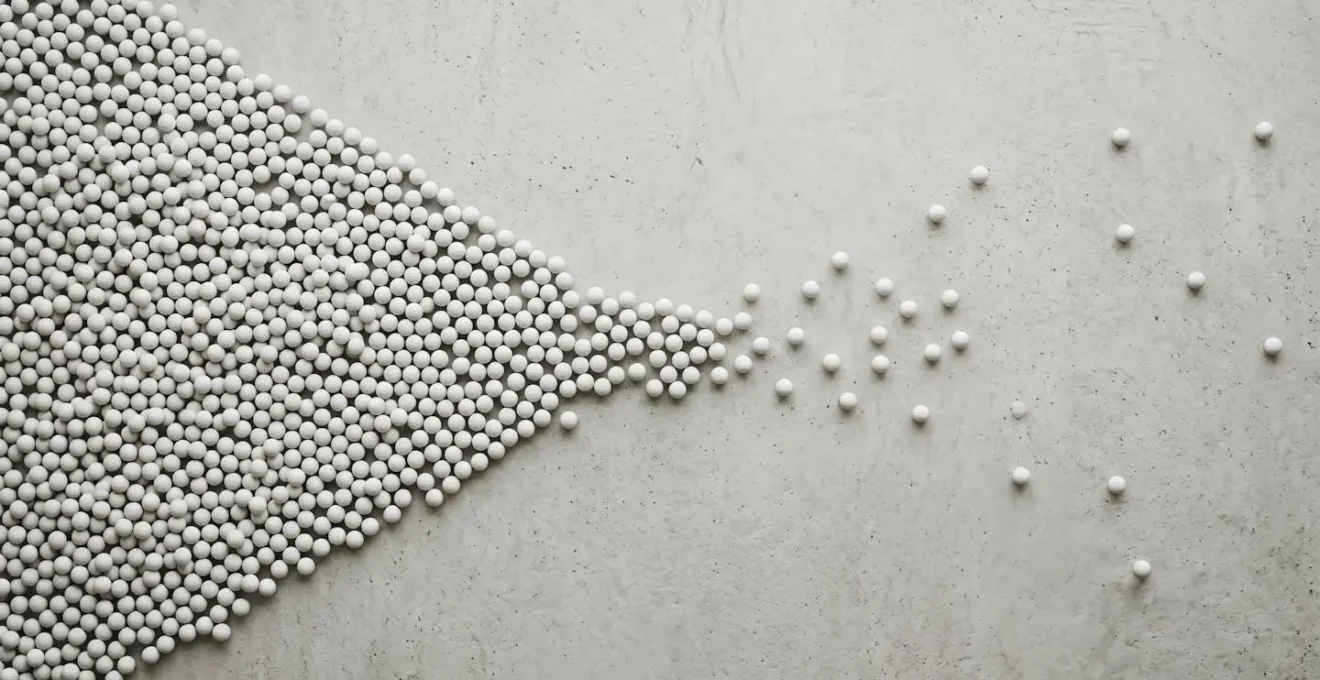

This extreme macro photograph contrasts the large proportional change of a “4x” claim with what can often be a small absolute difference in reality, urging viewers to question the scale and context of statistical claims.
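The heart-attack example above can be worked through in a few lines. This is a minimal sketch: the function name `risk_summary` is made up for illustration, and the "number needed to treat" (1 divided by the absolute risk reduction) is a standard companion measure that the example implies but does not name.

```python
def risk_summary(baseline_risk, treated_risk):
    """Compare the headline-friendly relative figure with the absolute one."""
    absolute_reduction = baseline_risk - treated_risk
    relative_reduction = absolute_reduction / baseline_risk
    # Number needed to treat: how many people take the drug to prevent one event.
    number_needed_to_treat = 1 / absolute_reduction
    return {
        "relative_risk_reduction": relative_reduction,
        "absolute_risk_reduction": absolute_reduction,
        "number_needed_to_treat": number_needed_to_treat,
    }


# Risk falls from 2 in 1,000 to 1 in 1,000, as in the example above.
summary = risk_summary(2 / 1000, 1 / 1000)
print(f"Relative risk reduction: {summary['relative_risk_reduction']:.0%}")
print(f"Absolute risk reduction: {summary['absolute_risk_reduction'] * 1000:.0f} per 1,000")
print(f"Number needed to treat:  {summary['number_needed_to_treat']:.0f}")
```

The same drug is a "50% reduction" and "one fewer heart attack per 1,000 people treated" at once; only the framing differs.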

To cut through this, statisticians use a tool called the Fragility Index. As explained in a 2019 paper in JAMA Surgery, this index is the minimum number of patients in a study whose outcome would need to flip (from non-event to event) to turn a “statistically significant” result into a non-significant one. A low fragility index means the conclusion is shaky. Shockingly, a landmark analysis of 399 trials found a median fragility index of just 8. In the typical trial, in other words, changing the outcome for only eight people would erase the “significant” finding. A quarter of these trials had a fragility index of three or less.

The fragility index is the minimum number of patients whose status would have to change from a nonevent to an event required to turn a statistically significant result to a nonsignificant result.

– Tignanelli CJ, Napolitano LM, JAMA Surgery, 2019
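To make the definition concrete, here is a rough sketch of how a fragility index could be computed for a two-group trial. It assumes significance is judged with a two-sided Fisher exact test at 0.05 and that patients are flipped in the group with fewer events; the trial counts (10 of 100 versus 25 of 100) are invented for illustration, and the function names are not from any library.

```python
from math import comb


def fisher_exact_p(events_a, n_a, events_b, n_b):
    """Two-sided Fisher exact p-value for event counts in two groups."""
    total_events = events_a + events_b
    denom = comb(n_a + n_b, total_events)

    def prob(x):
        # Chance that group A holds x of the events if group labels were arbitrary.
        return comb(n_a, x) * comb(n_b, total_events - x) / denom

    observed = prob(events_a)
    lowest = max(0, total_events - n_b)
    highest = min(n_a, total_events)
    # Add up every possible table that is at least as unlikely as the observed one.
    return sum(p for p in map(prob, range(lowest, highest + 1))
               if p <= observed * (1 + 1e-7))


def fragility_index(events_a, n_a, events_b, n_b, alpha=0.05):
    """Count non-event -> event flips needed to lose significance."""
    if fisher_exact_p(events_a, n_a, events_b, n_b) >= alpha:
        return 0  # the result was not significant to begin with
    # Flip patients in the group with fewer events, one at a time.
    if events_a > events_b:
        events_a, n_a, events_b, n_b = events_b, n_b, events_a, n_a
    flips = 0
    while events_a < n_a:
        events_a += 1
        flips += 1
        if fisher_exact_p(events_a, n_a, events_b, n_b) >= alpha:
            break
    return flips


# Hypothetical trial: 10/100 events on the drug, 25/100 on placebo.
fi = fragility_index(10, 100, 25, 100)
print("Fragility index:", fi)
```

A small number here means a handful of patients with a different outcome would have turned the headline from "significant" to "not significant".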

This is where the 95% Confidence Interval shines. A very fragile study will often have a CI that is extremely wide or sits dangerously close to the “line of no effect.” It visually represents this instability, showing you that while the single best estimate might be a “50% reduction,” the true effect could plausibly be as low as 2% or as high as 80%. This “range of uncertainty” gives you a much more honest picture than a single p-value or a dramatic relative risk figure.

Why Positive Studies Get Published 3x More Often Than Negative Ones?

Imagine a world where newspapers only report on planes that land safely, never mentioning any that crash. Your perception of airline safety would be dangerously skewed. This is precisely what happens in scientific publishing, a phenomenon known as publication bias. It’s the tendency for researchers and journals to publish studies with “positive” or statistically significant results, while studies showing no effect (null results) are often left in a “file drawer.”

This visual metaphor shows how published studies (clustered on one side) can create an asymmetric and misleading picture of the evidence, because unpublished negative results (the sparse side) are missing.

The effect is not trivial. Extensive research on publication bias has demonstrated that papers with statistically significant results are three times more likely to be published than those with null results. This systematically distorts the medical literature, creating an overly optimistic view of how well treatments work. As one research team noted, many scientists mistakenly view negative results as a personal or professional failure, when in reality, a well-conducted study that rejects a hypothesis is a crucial scientific contribution.

Case Study: The Antidepressant Illusion

A stark example of publication bias comes from an analysis of antidepressant trials registered with the U.S. Food and Drug Administration (FDA). When researchers compared the studies submitted to the FDA with what was actually published in medical journals, the discrepancy was shocking. Of the trials submitted to the FDA, only 51% were positive. However, judging by the published literature, a staggering 94% of trials appeared positive. Studies with negative or questionable results were either never published or were written in a way that spun them to appear positive. This created a public and professional perception that these drugs were far more effective than the complete body of evidence suggested.

As a reader, you must always assume that what you are seeing is the “best-case scenario” of the evidence. For every positive study you read about, there may be several unpublished negative ones you’ll never see. This is why a single “groundbreaking” study should be met with healthy skepticism. Real confidence comes from seeing similar results replicated by different teams over time.
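The "file drawer" effect can be illustrated with a toy simulation. Everything here is an assumption chosen for illustration: a small true effect of 0.1 (in standard-deviation units), 50 patients per arm, 2,000 hypothetical studies, and a rule that only positive results with p < 0.05 get published. Each study's estimate is drawn directly from its sampling distribution rather than from simulated patients.

```python
import random
from statistics import mean


def simulate_literature(true_effect=0.1, n_per_arm=50, n_studies=2000, seed=0):
    """Return (mean of all estimates, mean of published ones, share published)."""
    rng = random.Random(seed)
    # Standard error of a difference in means with unit-variance outcomes.
    std_error = (2 / n_per_arm) ** 0.5
    all_estimates, published = [], []
    for _ in range(n_studies):
        estimate = rng.gauss(true_effect, std_error)
        all_estimates.append(estimate)
        # Assumed publication rule: only positive, "significant" results appear.
        if estimate / std_error > 1.96:
            published.append(estimate)
    return mean(all_estimates), mean(published), len(published) / n_studies


all_mean, published_mean, share = simulate_literature()
print(f"Average effect across every study run: {all_mean:.2f}")
print(f"Average effect in the published ones:  {published_mean:.2f}")
print(f"Share of studies that got published:   {share:.0%}")
```

Under these assumptions, the published studies alone suggest an effect several times larger than the one built into the simulation, even though no individual study did anything wrong.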

Observational Study vs RCT: Why the Gold Standard Costs More?

Not all research is created equal. Understanding the hierarchy of evidence is fundamental to your BS Detector toolkit. Lower on the ladder are observational studies, where researchers simply observe a group of people without assigning any intervention. Near the top sits the Randomized Controlled Trial (RCT), widely considered the gold standard for clinical research.

Randomized controlled trials (RCTs) are considered the gold standard for clinical research. The strengths of RCTs include the development of a prospective study protocol with strict inclusion and exclusion criteria, a well-defined intervention, and predefined endpoints.

– BMC Anesthesiology research team, BMC Anesthesiology, 2016

Why are RCTs superior? The key is randomization. By randomly assigning participants to either a treatment group or a control group (receiving a placebo or standard care), researchers can minimize the risk of confounding variables. These are hidden factors that can skew results, like age, lifestyle, or income. In an observational study, you might find that people who eat organic food are healthier, but you can’t be sure if it’s the food itself or if those people also happen to exercise more and smoke less. An RCT breaks this link.

The difference in reliability is significant. Because observational studies can’t control for all confounding factors, systematic comparisons reveal that they tend to overestimate treatment effects and show much more variability than RCTs. So why aren’t all studies RCTs? The answer is cost and complexity. RCTs are incredibly expensive and time-consuming to run, often costing millions of dollars and taking years to complete. Observational studies are far cheaper and faster, which is why they are so common. When you see a study in the news, one of your first questions should be: was this an observational study or an RCT? The answer will tell you a lot about how much confidence you should place in its findings.

The Correlation Mistake: Why Eating Almonds Doesn’t Cause Longevity

“Correlation does not equal causation” is a common mantra, yet people still confuse the two constantly. An observational study might find a strong correlation between daily almond consumption and a longer lifespan. The headline writes itself: “Eat Almonds, Live Longer!” But this conclusion is almost certainly wrong. It’s more likely that people who eat almonds every day also engage in a host of other healthy behaviors: they exercise, avoid junk food, have better access to healthcare, and may have a higher socioeconomic status. The almonds aren’t causing longevity; they are a marker for a healthier lifestyle. This is called confounding, and it plagues observational research.
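A toy simulation shows how confounding can create an almond "effect" out of nothing, and how random assignment removes it. All numbers are invented for illustration: a hidden "healthy lifestyle" trait adds six years of life, people with that trait are four times as likely to eat almonds, and the almonds themselves do nothing.

```python
import random
from statistics import mean


def lifespan_gap(randomized, n=20000, seed=1):
    """Average lifespan of almond eaters minus non-eaters, in years."""
    rng = random.Random(seed)
    eaters, non_eaters = [], []
    for _ in range(n):
        healthy = rng.random() < 0.5  # hidden confounder
        if randomized:
            eats_almonds = rng.random() < 0.5  # a coin flip decides, as in an RCT
        else:
            # Observational world: healthy people choose almonds more often.
            eats_almonds = rng.random() < (0.8 if healthy else 0.2)
        # Lifespan depends only on lifestyle; almonds have zero built-in effect.
        lifespan = rng.gauss(82 if healthy else 76, 8)
        (eaters if eats_almonds else non_eaters).append(lifespan)
    return mean(eaters) - mean(non_eaters)


observational_gap = lifespan_gap(randomized=False)
randomized_gap = lifespan_gap(randomized=True)
print(f"Observational study: almond eaters live {observational_gap:+.1f} years")
print(f"Randomized trial:    almond eaters live {randomized_gap:+.1f} years")
```

In the observational version almond eaters appear to gain several years; once a coin flip decides who eats almonds, the gap shrinks to roughly zero, which is the true effect in this made-up world.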

The only reliable way to move from correlation to causation is with a well-designed Randomized Controlled Trial. The danger of relying on observational correlations is not just academic; it has led to major medical errors.

Case Study: The Hormone Replacement Therapy Disaster

Perhaps the most famous example of the correlation-causation fallacy is Hormone Replacement Therapy (HRT). For decades, observational studies consistently showed that post-menopausal women taking HRT had a lower risk of heart attacks. It was widely prescribed on this basis. However, when results from large-scale RCTs finally arrived in the early 2000s, they were shocking. The RCTs from the Women’s Health Initiative found that women on HRT actually had a slightly higher rate of heart attacks, strokes, and blood clots. The observational studies were wrong. It turned out that the women who opted for HRT in the earlier studies were, on average, healthier and of a higher socioeconomic status to begin with. Their healthy lifestyle, not the HRT, was protecting their hearts. The practice was dramatically and correctly reversed, but only after millions of women were exposed to unnecessary risk based on a faulty correlation.

Your 5-Point Study Credibility Checklist

- Identify the Study Type: Is it a powerful Randomized Controlled Trial (RCT) or a weaker observational study? Be extra skeptical of the latter.

- Check the Numbers: Look for the absolute risk reduction (e.g., “2 fewer events per 1000 people”), not just the dramatic-sounding relative risk (e.g., “50% reduction”).

- Examine the Confidence Interval: Is the 95% CI narrow and far from “no effect,” or is it wide and barely significant? The latter suggests a fragile result.

- Consider Publication Bias: Is this a single, surprising “breakthrough” study? Remember that it’s likely the most positive of many attempts, and negative results may be unpublished.

- Question Causation: If it’s not an RCT, what are the potential confounding factors? What else could explain the observed link besides a direct cause-and-effect relationship?

How to Use Bayesian Reasoning to Interpret Positive Test Results?

Our culture loves the idea of a single, definitive breakthrough. But science rarely works that way. A more realistic and robust way to think about evidence is through a lens called Bayesian reasoning. Instead of seeing a new study as the final word, a Bayesian thinker sees it as a piece of information that *updates* our prior beliefs. A single study is just a whisper, not a verdict. The real confidence comes from the accumulation of evidence over time.

This image of hands carefully stacking translucent discs captures the essence of Bayesian thinking: scientific confidence isn’t built on a single, opaque block of ‘proof’, but on the careful, cumulative layering of multiple pieces of evidence.

The need for this approach was powerfully demonstrated by the “replication crisis.” In science, a result is only considered robust if other independent teams can replicate it. However, a landmark 2015 project that attempted to replicate 100 published psychology studies, 97 of which had originally reported significant results, found that fewer than 40% of the replications were significant. This doesn’t mean the original studies were fraudulent, but it highlights how many initial “significant” findings are likely due to chance, publication bias, or specific circumstances that don’t generalize. Relying on any single one of them would be a mistake.

A single study with a p<0.05 is just a starting point. From a Bayesian perspective, it only slightly shifts our probability. The real confidence comes from multiple studies showing similar results.

– Open Science Collaboration researchers, Nature Communications Psychology, 2023

When you see a new, surprising study, ask yourself: What was the consensus before this study? Does this new evidence radically upend everything, or does it just slightly shift our understanding? A truly revolutionary finding needs an extraordinary amount of evidence. This concept of the “weight of evidence” is your shield against overreacting to the latest headline. Look for consistency across multiple studies, ideally from different research groups, before you truly start to believe a claim.
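The "weight of evidence" idea can be sketched with Bayes' rule. The figures below are assumptions for illustration, not values from any study: a study detects a real effect 80% of the time (its power) and produces a false positive 5% of the time. The prior is how plausible the claim was before the study.

```python
def update_belief(prior, power=0.8, false_positive_rate=0.05):
    """Probability the claim is true after one 'significant' study (Bayes' rule)."""
    true_positive = power * prior
    false_positive = false_positive_rate * (1 - prior)
    return true_positive / (true_positive + false_positive)


# One positive study, starting from different levels of prior plausibility.
for prior in (0.01, 0.10, 0.50):
    print(f"Prior {prior:.0%} -> after one positive study: {update_belief(prior):.0%}")

# An independent replication updates the belief again.
after_one = update_belief(0.10)
after_two = update_belief(after_one)
print(f"Prior 10% -> after two positive studies: {after_two:.0%}")
```

How far a single study moves the needle depends heavily on the starting point and on the assumed power: a long-shot claim stays unlikely after one positive result, and underpowered studies (a lower `power` value) shift the probability even less. Replication is what pushes the probability toward certainty.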

Why Following “Wellness Guru” Advice Could Hospitalise You This Winter?

The modern wellness landscape is dominated by charismatic “gurus” who build massive followings on social media. They share compelling stories of personal transformation, promoting specific diets, supplements, or protocols as the secret to their vibrant health. While often well-intentioned, their advice is one of the most dangerous forms of medical misinformation because it is almost always built on the weakest form of evidence: the anecdote.

An anecdote is a study with a sample size of one (n=1). When a guru claims that taking 10,000 IU of Vitamin D daily cured their seasonal depression and prevented them from getting sick all winter, they are presenting an uncontrolled, unverified observation. They are falling for the correlation-causation trap in real time. Maybe it was the Vitamin D. Or maybe they also started exercising, sleeping more, or simply experienced a placebo effect because they strongly believed the treatment would work. There is no control group, no randomization, and no blinding to tell the difference.

Following this kind of anecdotal advice can be genuinely harmful. For instance, the very same high dose of Vitamin D that the guru swears by could lead to hypervitaminosis D (vitamin D toxicity) in another person, a condition that can cause nausea, weakness, and, in severe cases, kidney problems and hospitalization. What appears to work for one person can be dangerous for another due to differences in genetics, diet, and underlying health conditions. This is precisely why we need large, controlled studies like RCTs: to understand the average effect and the range of outcomes across a diverse population, not just in one enthusiastic individual.

The wellness guru’s narrative is powerful because it’s a story, and humans are wired to respond to stories. But when it comes to your health, you need data, not just a good story. Applying your BS Detector toolkit here is crucial: recognize that personal testimony, no matter how convincing, is not reliable evidence. Always question the source of a claim and favor systematic research over individual anecdotes.

The “Normal” X-Ray That Missed a Hairline Fracture Until 6 Weeks Later

You fall and hurt your wrist. The doctor takes an X-ray and tells you, “Good news, it’s normal. No fracture.” You’re relieved, but the pain persists. Six weeks later, a follow-up X-ray clearly shows a healing hairline fracture that was invisible on the first image. How could a “normal” test miss something so important? This scenario illustrates a fundamental concept in medical testing: no test is perfect, and its true performance is best described by a confidence interval.

Every diagnostic test is evaluated on its sensitivity (its ability to correctly identify those with the disease) and specificity (its ability to correctly identify those without the disease). A headline might boast about a new test with “90% sensitivity.” This sounds great, implying it only misses 10% of cases. However, that 90% is just a point estimate—the single best guess from the data.

The 95% Confidence Interval gives us the crucial “range of uncertainty” around that number. For instance, a test reporting 90% sensitivity from a small validation study might have a 95% confidence interval of [75% – 98%]. This tells a much more honest story. It means that while the best estimate of the test’s sensitivity is 90%, its true performance in the wider population could plausibly be as low as 75% or as high as 98%. In a worst-case scenario, this test could miss one in four fractures, not just one in ten.

This is a perfect, practical application of the CI. It forces us to confront the inherent uncertainty in medical diagnostics. A narrow CI (e.g., [88% – 92%]) gives us confidence that the point estimate is reliable. A wide CI, like in our example, is a major red flag. It signals that the study may have been too small or the results too variable to be certain of the test’s true performance. It’s the difference between a precise measurement and a wild guess.
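The link between study size and interval width can be sketched with the Wilson score interval, one common way to put a 95% CI around a proportion. The sample sizes are assumptions for illustration: a small study that catches 27 of 30 fractures and a large one that catches 900 of 1,000 both report "90% sensitivity".

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# Same 90% point estimate, very different certainty.
small_low, small_high = wilson_interval(27, 30)
large_low, large_high = wilson_interval(900, 1000)
print(f"27/30 detected:    95% CI {small_low:.0%} to {small_high:.0%}")
print(f"900/1000 detected: 95% CI {large_low:.0%} to {large_high:.0%}")
```

The small study's interval spans roughly 74% to 97%, close to the wide example above, while the large study's interval is only a few percentage points wide.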

Key Takeaways

- Focus on absolute risk reduction (e.g., “3 in 1000”) not relative risk (“10% less”) to gauge real-world impact.

- A single “positive” study is often misleading due to publication bias and statistical fragility; look for replication and consistency across multiple studies.

- The 95% Confidence Interval is your best tool: a wide range or one that includes “no effect” is a major red flag indicating an uncertain or fragile result.

Deconstructing a Health Headline: Are Digital X-Rays Really ‘4x Better’?

Let’s put our entire toolkit to the test with a final, plausible headline: “New Study Finds Digital X-rays Are 4x Better Than Film at Detecting Early Fractures.” This claim sounds definitive and impressive. But as a newly minted critical reader of science, you know to ask deeper questions. This is no longer a simple statement of fact; it’s a puzzle to be deconstructed.

First, you apply the lesson from our discussion on risk: Is “4x better” a relative or absolute improvement? It’s clearly a relative claim. Your next step is to search the study for the absolute numbers. Let’s imagine you find that, for every 1,000 X-rays taken, older film imaging picked up 2 subtle hairline fractures, while the new digital imaging picked up 8. It is, indeed, four times better. However, the absolute improvement is only 6 additional fractures detected for every 1,000 X-rays taken. This is still a valuable improvement, but it puts the “4x” claim into a more sober, real-world context.

Second, you activate your Bayesian thinking and awareness of bias. Is this the first study to show this? Is it a small study from the manufacturer of the new digital X-ray machine? A single, industry-funded study should be viewed with immense skepticism compared to a series of independent replications. And was this a high-quality RCT, or a weaker observational study that might be influenced by confounding factors (e.g., newer digital machines being used by more skilled radiologists at better-funded hospitals)?

Finally, and most importantly, you look for the 95% Confidence Interval on that “4x better” claim. One study might report a CI of [2.5x to 6.0x]. This is a fairly narrow range, giving you confidence that the digital X-ray is truly and robustly superior. But what if the CI was [1.1x to 15x]? This is a much less reliable result. While the “best guess” is 4x, the true effect could be as low as a marginal 10% improvement (1.1x) or as high as a massive 15x improvement. This wide range tells you the result is not precise and needs more research. It shows you the fragility of the finding.
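To see where such intervals come from, here is a minimal sketch that puts a 95% CI around a "times better" ratio using the standard log-ratio approximation. The counts are assumptions built on the imagined 2 versus 8 detections per 1,000 X-rays: one small study with 1,000 X-rays per method and one ten times larger with the same detection rates.

```python
from math import exp, log, sqrt


def ratio_with_ci(events_new, n_new, events_old, n_old, z=1.96):
    """Detection-rate ratio and its approximate 95% CI (log method)."""
    ratio = (events_new / n_new) / (events_old / n_old)
    # Standard error of log(ratio); it shrinks as the event counts grow.
    se_log = sqrt(1 / events_new - 1 / n_new + 1 / events_old - 1 / n_old)
    return ratio, exp(log(ratio) - z * se_log), exp(log(ratio) + z * se_log)


small_ratio, small_low, small_high = ratio_with_ci(8, 1000, 2, 1000)
large_ratio, large_low, large_high = ratio_with_ci(80, 10000, 20, 10000)
print(f"Small study: {small_ratio:.1f}x (95% CI {small_low:.2f}x to {small_high:.1f}x)")
print(f"Large study: {large_ratio:.1f}x (95% CI {large_low:.2f}x to {large_high:.1f}x)")
```

Both studies report "4x better", but with only ten detected fractures in total the small study's interval stretches from below 1x (no improvement at all) to well above 15x. The larger study narrows it to roughly 2.5x to 6.5x.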

Now you have the toolkit. The next time you see a sensational health headline, don’t just accept it. Apply these principles, look for the confidence interval, and empower yourself to distinguish between credible science and misleading noise.